
Human observation and manual annotation have been the “gold standard” in the behavioral sciences since their inception. Thanks to advances in audio processing and machine learning, behavior can now be measured automatically from signals. Automatic detection of behavioral and emotional cues has created new applications and use cases across behavioral health, including autism spectrum disorder, addiction counseling, PTSD, Parkinson’s, depression, and couples’ therapy. What follows are two examples of how Behavioral Signal Processing (BSP) has been applied in healthcare-related pilots.

Detecting Blame in Couples’ Therapy

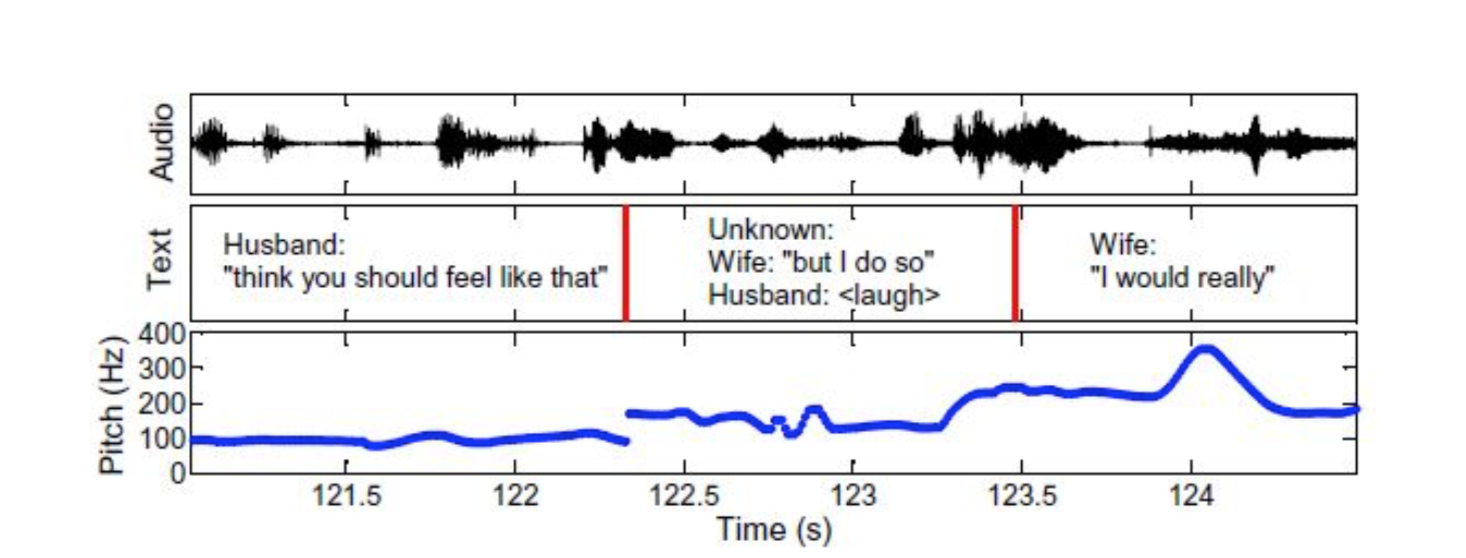

Consider, for example, a couple visiting a marriage counselor to rebuild a healthy relationship. Researchers at SAIL used audio recordings of couples discussing contentious topics in marriage counseling sessions to determine automatically whether one spouse was blaming the other. Such behaviors lead to more negative relationships and a higher likelihood of divorce. Working directly from the audio (e.g., intonation, volume, and affect), the researchers could identify blaming behavior with 74% accuracy; intonation alone reached 70% accuracy. When the audio was also converted to text via speech-to-text and language use was analyzed alongside the audio cues, the authors achieved 79% accuracy. Intuitively, spouses who speak about each other as a team (“we”) or about their own behavior (“I”) are speaking with less blame than those who are concerned with what “you” do that affects “me” (see Table 1). A recurring observation across the use cases and pilots discussed here is that “how” things are said carries a tremendous amount of information and is as important as “what” is said. When the how is combined with the what, behaviors and emotions can be predicted more accurately, as the improvement from 74% to 79% suggests (see Fig. 2).
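To make the lexical side of this concrete, the following is a minimal sketch of how the words in Table 1 could be turned into a numeric cue. It assumes a plain-text transcript is already available from speech-to-text. The function name `blame_word_rates` and the use of the Table 1 words as a fixed lexicon are illustrative assumptions, not the classifier used in the cited studies.

```python
import re
from collections import Counter

# Word lists copied from Table 1. Using them as a fixed lexicon is an
# illustrative assumption, not the cited authors' model.
BLAME_WORDS = {"you", "your", "me"}
NON_BLAME_WORDS = {"um", "i", "we"}


def blame_word_rates(transcript: str) -> dict:
    """Return the fraction of tokens that fall in each Table 1 word list."""
    # Lowercase and keep simple alphabetic tokens (with ASCII apostrophes).
    tokens = re.findall(r"[a-z']+", transcript.lower())
    total = len(tokens)
    if total == 0:
        return {"blame": 0.0, "non_blame": 0.0}
    counts = Counter(tokens)
    blame = sum(counts[w] for w in BLAME_WORDS)
    non_blame = sum(counts[w] for w in NON_BLAME_WORDS)
    return {"blame": blame / total, "non_blame": non_blame / total}


if __name__ == "__main__":
    print(blame_word_rates("You never listen to me"))
    print(blame_word_rates("We can fix this and I will help"))
```

In practice, rates like these would be only one input among many; the results above indicate that acoustic cues carry much of the signal, so a real system would combine lexical and audio features in a trained classifier.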

Quantifying Emotional Speech Behavior of Children with Autism

We know that when two people interact, one person’s emotion should influence the other’s. It would be quite awkward if person A were very excited about some news and person B stayed monotone and didn’t share in that excitement; that would indicate a poor interaction. In autism spectrum disorder, this is exactly the kind of thing that can happen: autism is a social-communicative disorder in which the ability to interact and engage in conversation is reduced or absent. This is partially because many children with autism do not pick up on or express emotional cues as expected. While clinicians perceive this behavior and describe it qualitatively, they have not had reliable ways of quantifying it. Researchers at SAIL were able to quantify the emotional exchange between child and psychologist, looking at the degree to which each person responded to the other’s changes in emotion as the interaction unfolded.4
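One simple way to express “the degree to which each person responded to the other” as a number is a lagged correlation between two per-turn emotion series. The sketch below assumes each speaker already has one arousal score per conversational turn (hypothetical values here) and uses Pearson correlation as a generic stand-in; it is not the specific synchrony measure from the cited study.

```python
from statistics import mean


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def lagged_synchrony(leader, follower, lag=1):
    """Correlate leader[t] with follower[t + lag].

    A high value suggests the follower's arousal tracks the leader's
    earlier arousal; a value near zero suggests little coupling.
    """
    shortest = min(len(leader), len(follower))
    if lag < 0 or lag >= shortest:
        raise ValueError("lag must be non-negative and shorter than the series")
    n = shortest - lag
    return pearson(leader[:n], follower[lag:lag + n])


if __name__ == "__main__":
    # Hypothetical per-turn arousal scores, for illustration only.
    child = [0.1, 0.5, 0.9, 0.4, 0.2]
    responsive = [0.0, 0.1, 0.5, 0.9, 0.4]   # mirrors the child one turn later
    flat = [0.3, 0.3, 0.3, 0.3, 0.3]         # does not respond at all
    print(lagged_synchrony(child, responsive, lag=1))
    print(lagged_synchrony(child, flat, lag=1))
```

Computing the measure in both directions (child leading, then psychologist leading) gives a rough picture of who is adapting to whom over the course of the session.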

The “how” of speech also includes pitch, loudness, rate, rhythm, and intonation, known collectively as speech prosody. For children with autism, speech prosody is often described as flat or robotic, and as not coordinated with the intent of the message. A variety of methods can quantify speech prosody and put numbers on the “felt-sense” perceptions of clinical researchers. These methods can be used in assistive devices or in speech interfaces that track progress over time. Intriguingly, the researchers were able to determine the child’s autism severity by looking at the interacting psychologist’s behavior, since the psychologist must respond to the child’s actions.5 Word choice, time to respond, and even the amount of speech were all critical variables that quantified the interaction. These same audio-derived features have value for many other verticals and use cases.
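As an illustration of putting a number on “flat” prosody, the sketch below measures how much a speaker’s pitch varies, in semitones, over an utterance. It assumes a pitch tracker has already produced one fundamental-frequency value in Hz per frame, with 0 marking unvoiced frames; that input format and the function name `pitch_variability_semitones` are assumptions for this example, and the cited work uses a richer set of prosodic features.

```python
import math
from statistics import mean, pstdev


def pitch_variability_semitones(f0_hz):
    """Standard deviation of pitch, in semitones, over voiced frames.

    f0_hz: per-frame fundamental frequency in Hz, 0 for unvoiced frames
    (an assumed input format). Semitones are measured relative to the
    speaker's own mean pitch, so speakers with different pitch ranges
    can be compared on the same scale.
    """
    voiced = [f for f in f0_hz if f > 0]
    if len(voiced) < 2:
        return 0.0
    reference = mean(voiced)
    semitones = [12 * math.log2(f / reference) for f in voiced]
    return pstdev(semitones)


if __name__ == "__main__":
    # Hypothetical contours, for illustration only.
    monotone = [200, 200, 0, 200, 200]
    expressive = [150, 220, 0, 300, 180]
    print(pitch_variability_semitones(monotone))
    print(pitch_variability_semitones(expressive))
```

A lower value corresponds to a flatter contour. Similar summary statistics could be computed for loudness or speaking rate and tracked across sessions.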

2 “Toward automating a human behavioral coding system for married couples’ interactions using speech acoustic features.” Matthew P. Black et al. Speech Communication, vol. 55, no. 1, pp. 1-21, January, 2013.

3 “‘That’s aggravating, very aggravating’: Is it possible to classify behaviors in couple interactions using automatically derived lexical features?” Panayiotis G. Georgiou et al. Proceedings of ACII, pp. 87-96, October, 2011.

4 “An investigation of vocal arousal dynamics in child-psychologist interactions using synchrony measures and a conversation-based model.” Daniel Bone, Chi-Chun Lee, Alexandros Potamianos, and Shrikanth S. Narayanan. Proceedings of Interspeech, pp. 218-222. September, 2014.

5 “The psychologist as an interlocutor in autism spectrum disorder assessment: Insights from a study of spontaneous prosody.” Daniel Bone et al. Journal of Speech, Language, and Hearing Research, vol. 57(4), pp. 1162-1177. 2014.

Whitepaper on Behavioral Signal Processing

Table 1. Most pro-blame and anti-blame words used by couples. 3

| Blaming | Not Blaming |
| --- | --- |
| You | Um |
| Your | I |
| Me | We |

Fig. 2. Computing meaningful information from “how” words are uttered. 2
